Your student studied everything on the syllabus. They revised every topic, practised every concept, and walked into the exam feeling prepared. They read the question, recognised the topic immediately, and wrote everything they knew about it.

The response sheet comes back after evaluation. The score is 11 out of 20. The student comes to you confused.

“But I knew the answer. I wrote about it. Why did I lose so many marks?”

Here is the uncomfortable part. When you read the answer, you can see the student was not entirely wrong. The knowledge was there. But something else was missing: something the question expected that the student never knew to give.

This situation happens in classrooms everywhere, every semester. And most of the time, neither the student nor the educator has a clear conversation about why.

Understanding this gap is more important than most educators realise. Because the issue is rarely just about knowledge. It is about how that knowledge is supposed to be demonstrated during an exam, and whether anyone ever told the student what that actually looks like.

Exams Contain Hidden Expectations

Every exam question contains two layers. The first layer is visible. It is the topic, the subject matter, the content students are expected to know. Students study this. They prepare for it. They usually recognise it the moment they see the question.

The second layer is often invisible to students. It is the expectation of how the answer should be structured, what type of reasoning is required, and what the examiner is actually looking for during grading.

This second layer is where most marks are lost. When a question says “analyse,” it is not asking for a definition. It is asking the student to break something down and explain how the parts relate to each other. When a question says “evaluate,” it expects a judgement supported by reasoning, not just a description.

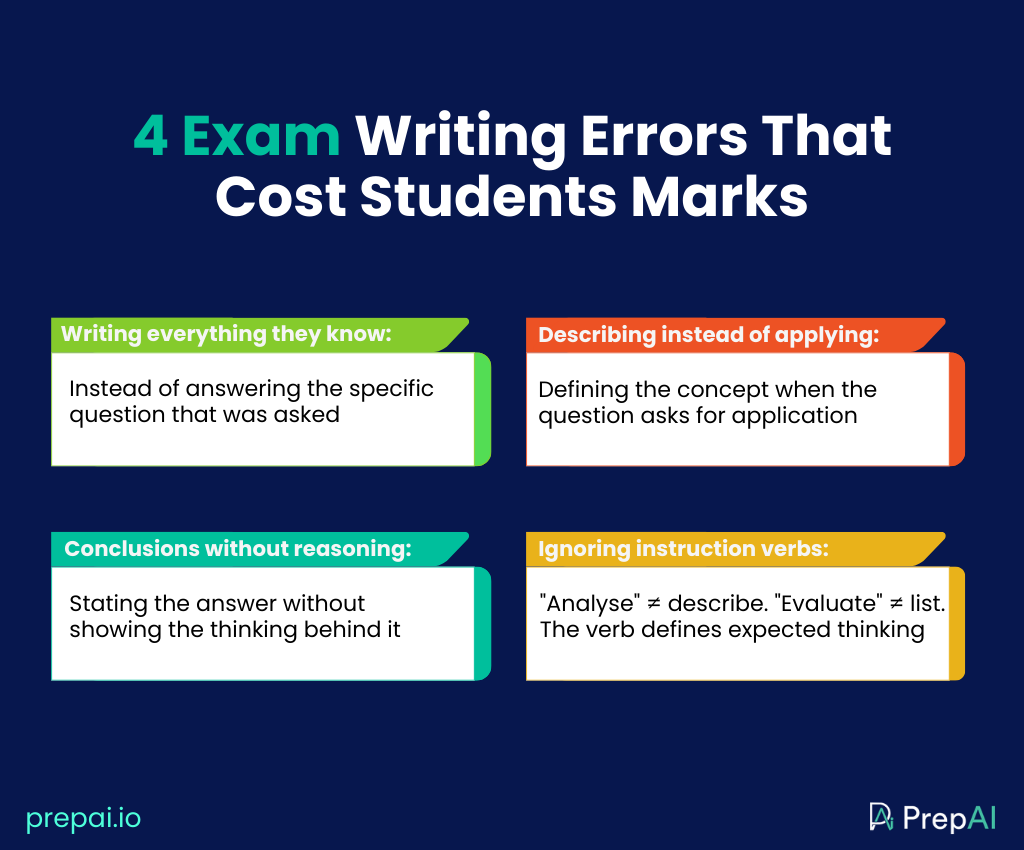

These distinctions are critical for skill-based assessment. Common exam writing errors that consistently cost students marks include:

- writing everything they know about a topic instead of answering the specific question

- describing a concept instead of applying it

- presenting conclusions without showing the reasoning behind them

- ignoring the instructions that define the expected type of thinking

These issues are not about effort. Most students making these mistakes are not underprepared. They simply cannot see the hidden expectations built into how the question was written.

This is where AI and assessment in education starts to become genuinely relevant. Not just as a tool to generate questions faster, but as a way to help educators design questions whose expectations are visible from the start. AI-based assessment tools like PrepAI allow educators to build questions aligned to specific Bloom’s Taxonomy levels, so the cognitive expectation is defined before the question ever reaches a student.

Why Students Struggle With This

Here is something worth understanding about how most students prepare for exams.

They study content. They re-read notes, memorise definitions, highlight textbooks, and practise questions from previous papers. This is useful. But it only prepares them for the first visible layer of the exam, the topic and the subject matter.

Nobody explicitly teaches them the second layer.

The conventions of exam writing (what “analyse” means in practice, how much reasoning is expected, when to argue versus when to describe) are things experienced educators understand intuitively after years in academia. They can feel so obvious that they go unsaid.

But to a student sitting their exams, these expectations are not obvious at all. From the student’s perspective, the task feels straightforward: recognise the topic, write everything they know about it, and if the content is correct, the marks should follow.

So exams do not only evaluate knowledge. They also evaluate how that knowledge is structured, explained, and connected to the exact question being asked.

This is not a laziness problem or a preparation problem. It is a communication problem. Students are not shown what a strong answer actually looks like, so they write what feels safe and hope they will get good marks.

Why Educators Notice This During Grading

Ask any educator who has graded a large batch of response sheets and they will describe the same pattern.

Many students who lose marks clearly know the topic. The issue is not missing knowledge. The issue is that the answer does not respond to the question in the way it was intended.

One educator in the PrepAI Community captured this honestly. After results came back, the educator posted:

“Three students scored below 15 out of 50. I am trying to understand what I missed.”

Not “what did the students miss” but “what did I miss.” That shift in perspective is important. Because often, when students consistently underperform on a specific question, the problem is not the students. It is the question itself, and the hidden expectation that was never made visible.

During grading, this pattern becomes obvious quickly. Students describe when the question requires analysis. They present information when the question expects an argument. They write about the topic without addressing the specific angle that was asked for.

But by the time the educator recognises this, the exam is already over. The marks are already lost. This is one of the most consistent and most preventable assessment design challenges in education. And it starts with how the question was written, not how the student studied.

How Educators Can Reduce This Problem

The gap between what students know and what they score is not inevitable. In many cases it comes down to how assessments are designed and communicated.

Design questions that ask for one clear type of thinking. Questions that combine multiple instructions in a single prompt create confusion about which one to prioritise. Focused question design reduces the chance of students answering the wrong version of what was asked.
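One rough way to check a draft for this is to count the command verbs in each question. The sketch below is only an illustration and is not how PrepAI works: the `COMMAND_VERBS` table and the function names are assumptions invented for this example, and only the meanings given for “analyse” and “evaluate” come from the discussion earlier in this article.

```python
import re

# Hypothetical table mapping command verbs to the type of thinking they signal.
# "analyse" and "evaluate" follow the article; the other entries are assumptions.
COMMAND_VERBS = {
    "define": "recall",
    "describe": "description",
    "explain": "explanation",
    "apply": "application",
    "analyse": "analysis",
    "analyze": "analysis",
    "evaluate": "judgement",
}


def command_verbs(question: str) -> list:
    """Return the known command verbs in a question, in order of appearance."""
    words = re.findall(r"[a-z]+", question.lower())
    return [word for word in words if word in COMMAND_VERBS]


def expected_thinking(question: str) -> list:
    """Return the distinct types of thinking the question asks for, sorted."""
    return sorted({COMMAND_VERBS[verb] for verb in command_verbs(question)})


def is_focused(question: str) -> bool:
    """True when the question asks for exactly one type of thinking."""
    return len(expected_thinking(question)) == 1


mixed = "Describe the greenhouse effect and evaluate two policies that address it."
focused = "Evaluate the effectiveness of carbon taxes in reducing emissions."

print(expected_thinking(mixed))   # ['description', 'judgement']
print(is_focused(mixed))          # False
print(is_focused(focused))        # True
```

A check like this only matches exact verb forms and cannot judge whether the wording is fair, so it works best as a prompt for a second look rather than as a verdict on the question.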

Use an AI question generator for teachers to build better questions from the start. PrepAI allows educators to generate structured questions directly from their own teaching material, including lecture notes, topic inputs, and curriculum content, with Bloom’s Taxonomy levels built in from the beginning. Instead of accidentally writing a recall question when the intention was to write an analysis question, PrepAI helps align what needs to be tested with how the question is actually written. This reduces hidden expectations before they are ever built into the paper.

Review question wording before the exam goes live. A question that seems clear to the author can read as ambiguous to someone encountering it without the same context. This is where the PrepAI Community becomes directly useful. Educators share their draft assessments with peers who read the questions without the author’s assumptions, the way a student will read them, and give honest feedback on whether the expectations are clear, fair, and testing what they are supposed to test.

How PrepAI and the PrepAI Community Work Together

Reducing the expectation gap requires two things working together. Better question design from the start, and honest review before the paper reaches students.

PrepAI handles the design side. By generating questions from your own course material with specific cognitive levels built in, the expectation stops being hidden and becomes part of the structure of the question itself.

The PrepAI Community handles the review side. Educators share real assessment drafts and get structured feedback from peers who understand both the subject and the challenge of designing questions that test genuine understanding rather than surface recall.

A physics educator shared a draft and asked honestly whether her questions were testing physics understanding or just formula recall. A science educator posted a Human Microbiome paper and asked whether it was truly showing the complexity of the topic or just rewarding familiarity with common examples. Both found something through peer review that solo revision had not revealed, because another perspective catches what the original author cannot see alone.

Every educator who contributes to the PrepAI Community by sharing drafts, reviewing peers’ work, and refining questions is building something beyond a single better exam. They are building a visible professional reputation based on the quality of their thinking. That means being seen by peers who engage seriously with their work, being trusted for the care they put into assessment design, and being recognised as an educator who takes the craft of teaching as seriously as the subject itself.

The Gap Is Fixable

Go back to that student who scored 11 out of 20.

They knew the answer. The knowledge was genuinely there. What was missing was an understanding of how to demonstrate it in the way the question expected, and a question clear enough to give them a fair chance to do so.

That gap did not have to exist.

Clearer questions. Explicit expectations. Honest peer review before the paper goes live. These are not complicated changes. They are small deliberate decisions that make an enormous difference to every student sitting in the exam hall trying to show what they know.

PrepAI helps educators design questions that say what they mean. The PrepAI Community helps educators catch what they cannot see alone.

Share your next assessment draft at the PrepAI Community.